Fun With Photoscan.

A year ago I started playing more with Agisoft Photoscan, at the behest of a very respected VFX artist. It is now a professional tool in my kit, but also a hobby. On the hobby side it is always fun to do things guerrilla style on purpose, to learn how far you can push the software: take photos, throw them in, and only eventually read what scant documentation exists. (It is much more fun to kick the tires and answer questions after the fact.) These experiments typically take place late at night, so I grab the first thing I see and take a picture. Well, several of them.

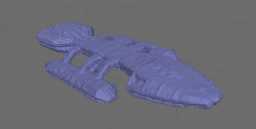

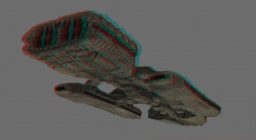

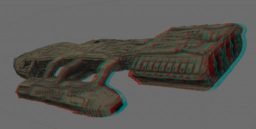

This tiny Konami toy of the Battlestar Galactica sits on my shelf and is only about 4 inches long. I like the photo in black and white because when I first saw it in 1978, my father only had a black-and-white TV, and most magazines were not full-color. The toy is incredibly detailed, but it does NOT come with a miniature John Dykstra model or tiny C-stands (hat tip to Paul for suggesting I build some, but there is little time to bend paper clips). However, in 2013 I have camera technology in my pocket and visual effects a button click away. Photogrammetry time!

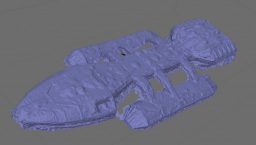

I tried something different with this one: instead of walking all around the object, I rotated it in its light source while capturing. This is a standard technique for me when I photograph actors on set, but I had not really tried it with this photogrammetry software. I was told it would not work. After about half an hour of flash photography with a Nikon zoom/macro lens and some processing, I got a reasonable result. Once the texture was averaged from all the photos, it really took shape.

Averaged together, the single-light-source images yield a texture that contains just color and ambient occlusion.

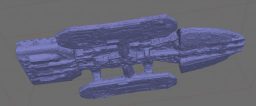

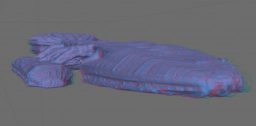

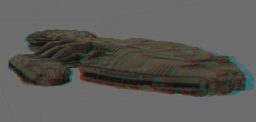

Not a bad result for a macro-focus, shallow depth-of-field, low-light situation, but Photoscan would not reconstruct the bottom and top at the same time; either the lighting or the number of photos was throwing it off. Several other attempts over the year produced similar results, until I put all 200 photos taken in the last year together in the same project. Photos from a Nikon SLR under flash and under single-light-source conditions, combined with tight macro shots from an iPad Air, actually produced results!

I’d recommend some 3D glasses to see half of these.

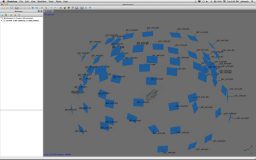

The initial solve took a few minutes to align automatically, and 15 minutes for a mid-res build of the geometry. The ultra-res geometry seen here is 500K polygons and took 10 hours to compute. In my experience, better, cleaner source photos reduce this time, but higher-density solves will always take a while. It was necessary to mask out all the unwanted parts of each image, or Photoscan would not solve the perspective correctly. I did this in Photoshop and saved the mask as an alpha channel, though it would be faster to save individual mask images and import them, because each alpha channel has to be converted to a Photoscan mask one image at a time. The final texture from the flash photography averaged out all the direct light sources when all the photos were projected and combined. The 200-photo version similarly reduced the texture to color and ambient occlusion, but not as well, because a larger number of its photos were not properly white-balanced. This was an on-the-fly experiment about capture, not color.
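As a rough illustration of why combining many single-light photos washes out the direct lighting, here is a toy sketch. It assumes a simple 2D Lambert (diffuse) model with the light stepping evenly around the object. It is not how Photoscan blends textures, and the function names are made up for this example. It also ignores occlusion, which is the part of the shading that would survive as ambient occlusion.

```python
import math

def lambert(normal_deg, light_deg):
    """Diffuse brightness of a surface facing normal_deg, lit from light_deg.

    Toy 2D model (an assumption for illustration): brightness is the cosine
    of the angle between surface normal and light, clamped at zero.
    """
    return max(0.0, math.cos(math.radians(normal_deg - light_deg)))

def averaged_shading(normal_deg, num_photos):
    """Mean brightness of one texel over num_photos evenly spaced light angles."""
    step = 360.0 / num_photos
    total = sum(lambert(normal_deg, i * step) for i in range(num_photos))
    return total / num_photos

if __name__ == "__main__":
    # Compare one photo (light at 0 degrees) with the mean of 16 photos
    # for surfaces facing in different directions.
    for normal in (0, 45, 90, 135):
        single = lambert(normal, 0)
        mean = averaged_shading(normal, 16)
        print(f"normal {normal:3d} deg: one photo {single:.2f}, mean {mean:.2f}")
```

In a single photo the brightness swings between 0 and 1 depending on which way the surface faces. Averaged over a full turn it stays close to 1/π (about 0.32) for every direction in this model, so the directional shading drops out and only the surface color scales through.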

I have seen better results from Photoscan on other projects, but I am encouraged by these because it is difficult to maintain focus in macro photography. You will note a slight bend in the outrigger landing bays. This is accurate to the plastic miniature, which seems to have some deformation from the casting process.

Overall I am pleased with the results of this test. I now know that the difficulty in this case is not the lighting but the number of photos required and their quality. More overlap in the photography session is necessary, flash photography produces better results, and a rotation of approximately 22° around the subject per photo is required. With proper preparation I can probably get better results. Maybe on another holiday.
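For planning a session, the 22° figure translates into a shot count per full turn of the subject. The sketch below is only a back-of-the-envelope helper. The function names and the idea of repeating the turn at several camera heights ("rings") are assumptions for illustration, not anything Photoscan prescribes.

```python
import math

def turntable_angles(max_step_deg=22.0):
    """Evenly spaced rotation angles covering 360 degrees.

    The step is shrunk slightly if needed so that no gap between
    neighbouring photos exceeds max_step_deg.
    """
    count = math.ceil(360.0 / max_step_deg)
    actual_step = 360.0 / count
    return [round(i * actual_step, 2) for i in range(count)]

def shots_needed(max_step_deg=22.0, rings=1):
    """Total photos for `rings` full turns (hypothetical: one per camera height)."""
    return len(turntable_angles(max_step_deg)) * rings

if __name__ == "__main__":
    angles = turntable_angles(22.0)
    print(len(angles), "photos per turn, first few angles:", angles[:4])
    print("three rings (low, level, high):", shots_needed(22.0, rings=3))
```

At 22° per photo one full turn needs 17 shots, since 16 would leave gaps of 22.5°. Capturing top and bottom as well means repeating the turn at other heights, which multiplies the count accordingly.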

Ola JB!

Really cool!

Thanks

Tom